Want to spot a deepfake? The eyes could be a giveaway

Reflections in the eyes of AI-generated images of people don’t always match up, researchers report.

A technique from astronomy could help reveal reflection differences in the eyes of AI-generated people

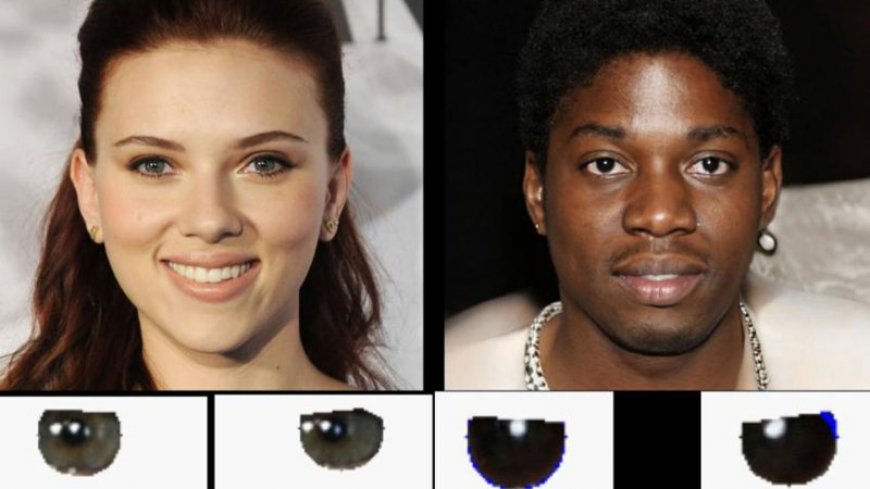

Reflections in the eyes of these images reveal that the one on the right is AI-generated, while the image on the left is a real photo (of the actress Scarlett Johansson).

Adejumoke Owolabi

Clues to deepfakes may be in the eyes.

Researchers at the University of Hull in England reported July 15 that eye reflections offer a potential way to suss out AI-generated images of people. The approach relies on a technique also used by astronomers to study galaxies.

In real images, light reflections in the eyes match up, showing, for instance, the same number of windows or ceiling lights. But in fake images, there’s often an inconsistency in the reflections. “The physics is incorrect,” says Kevin Pimbblet, an observational astronomer who worked on the research with then–graduate student Adejumoke Owolabi and presented the findings at the Royal Astronomical Society’s National Astronomy Meeting in Hull.

To carry out the comparisons, the team first used a computer program to detect the reflections and then used those reflections’ pixel values, which represent the intensity of light at a given pixel, to calculate what’s known as the Gini index. Astronomers use the Gini index, originally developed to measure wealth inequality in a society, to assess how light is distributed across an image of a galaxy. If one pixel has all of the light, the index is 1; if the light is evenly distributed across pixels, the index is 0. This quantification helps astronomers classify galaxies into categories such as spiral or elliptical.
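As a rough illustration of the index described above, here is a minimal Python sketch. It uses one rank-weighted form of the Gini index that is common in galaxy-morphology work; the article does not say which exact formula the Hull team used, so treat the formula choice, the function name `gini` and the sample pixel lists as assumptions made for illustration.

```python
def gini(pixel_values):
    """Gini index of a set of pixel intensities (illustrative sketch).

    Assumed form: G = sum_i (2i - n - 1) * x_i / (mean * n * (n - 1)),
    with the values x_i sorted in increasing order and i starting at 1.
    """
    values = sorted(abs(v) for v in pixel_values)
    n = len(values)
    total = sum(values)
    # With fewer than two pixels, or no light at all, inequality is undefined;
    # this sketch returns 0.0 in that case.
    if n < 2 or total == 0:
        return 0.0
    mean = total / n
    weighted = sum((2 * i - n - 1) * v for i, v in enumerate(values, start=1))
    return weighted / (mean * n * (n - 1))


# All of the light in a single pixel gives the maximum index.
print(gini([0, 0, 0, 10]))  # 1.0
# Light spread evenly across pixels gives the minimum index.
print(gini([5, 5, 5, 5]))   # 0.0
```

With this normalization, the two extreme cases match the 1 and 0 endpoints described in the text; real reflections would fall somewhere in between.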

In the new work, the difference in the Gini indices between the left and right eyes is the clue to the image’s authenticity. For about 70 percent of the fake images the researchers examined, this difference was much greater than the difference for real images. In real images, there tended to be no, or virtually no, difference.
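The left-versus-right comparison can be sketched in Python as follows. This is not the researchers' code: the helper names, the sample pixel lists and especially the `threshold` value are hypothetical, and the sketch assumes the reflection pixels for each eye have already been extracted by some other program.

```python
def gini(pixel_values):
    """Rank-weighted Gini index of pixel intensities (assumed formula)."""
    values = sorted(abs(v) for v in pixel_values)
    n = len(values)
    total = sum(values)
    if n < 2 or total == 0:
        return 0.0
    weighted = sum((2 * i - n - 1) * v for i, v in enumerate(values, start=1))
    return weighted / ((total / n) * n * (n - 1))


def eye_gini_difference(left_pixels, right_pixels):
    """Absolute difference between the Gini indices of the two eyes."""
    return abs(gini(left_pixels) - gini(right_pixels))


def needs_closer_look(left_pixels, right_pixels, threshold=0.1):
    """Flag an image for human review when the two eyes disagree.

    The threshold is a made-up placeholder; no particular value is
    known to correspond to fakery.
    """
    return eye_gini_difference(left_pixels, right_pixels) > threshold


# Matching reflections in both eyes: no difference, nothing flagged.
matching = [0, 2, 8, 2, 0]
print(needs_closer_look(matching, matching))  # False

# One eye with a concentrated highlight, the other evenly lit: flagged.
print(needs_closer_look([0, 0, 0, 10], [5, 5, 5, 5]))  # True
```

A flag here means only that the image may merit a closer look by a person, not that it is fake.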

“We can’t say that a particular value corresponds to fakery, but we can say it’s indicative of there being an issue, and perhaps a human being should have a closer look,” Pimbblet says.

He emphasizes that the technique, which could also work on videos, is no silver bullet for detecting fakery (SN: 8/14/18). A real image can look like a fake, for example, if the person is blinking or if they are so close to the light source that only one eye shows the reflection. But the technique could be part of a battery of tests, at least until AI learns to get reflections right.
